
Robots Weekly: Weapons of Math Destruction

A book notes edition of Robots Weekly from my recent read of Cathy O’Neil’s Weapons of Math Destruction. Like CliffsNotes, except it won’t help you b.s. your way through class.

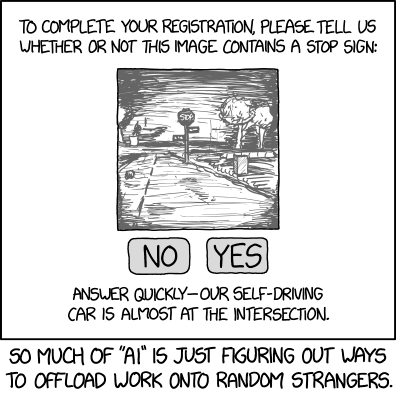

Lighthearted Moment

The above is to offset a little of the doom-and-gloom that might follow. Also, it fits pretty well.

What is a weapon of math destruction?

It’s basically an algorithm that utilizes Big Data at scale to cause harm, whether on purpose or by accident.

This includes things like teacher scoring based on test results (shout out to the cheaters!) and Facebook’s ad targeting/news feed capabilities to allow fraud or manipulation (hey there President Orangeface).

WMDs have three characteristics: they’re opaque, unregulated, and uncontestable.

In other words, they’re black boxes. Like AI. ⬛

No one really knows what the algorithms are doing and there aren’t any feedback mechanisms to allow them to learn.

The Atlantic recently published an article about The Coming Software Apocalypse that touches on a similar problem.

“The programmer, staring at a page of text, was abstracted from whatever it was they were actually making.”

Basically, code is so complex now that people really don’t know what it does anymore.

Full disclosure: I could not finish this article. It just kept going.

I thought WMD raised some good points, and I hope it sparks conversation on a topic that needs some attention, but ultimately I felt (like I do about a lot of business/business-adjacent books) that it could have been a lot shorter. A lot of good examples, but I don’t think they were all needed to make the point.

Here are some passages I noted down:

- Models are, by their very nature, simplifications.

- To create a model we make choices about what’s important enough to include, simplifying the world into a toy version that can be easily understood and from which we can infer important facts and actions.

- Models, despite their reputation for impartiality, reflect goals and ideology.

- Models are opinions embedded in mathematics.

My takeaway on all these: models aren’t the whole picture and are only as good as the person/people creating them. Computers aren’t biased but humans are, and we can hardcode those biases into computers.

- A machine learning program will often require millions or billions of data points to create its statistical models of cause and effect.

They call it BIG data for a reason.

- We humans each have a sense for when an idea becomes an established fact and know when it has been debunked or discarded. However, that distinction flummoxes even the most sophisticated AI.

Again, computers don’t understand concepts. They understand data and the underlying patterns. The algorithms don’t know when they’ve made a mistake by ethical standards so they’ll never tell you your zip code targeting might be racist or facial recognition might be sexist/bigoted.
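To make the zip code point concrete, here is a toy sketch in Python. Everything in it is made up for illustration: the loan history, the two zip codes, the groups "A" and "B", and the function names are all hypothetical, and no real lender's model is this simple. The "model" only ever looks at zip code, yet the approval rates still split along group lines because the groups live in different places.

```python
from collections import defaultdict

# Hypothetical, made-up loan history: (zip_code, group, repaid).
# The "group" column is never shown to the model.
HISTORY = [
    ("11111", "A", True), ("11111", "A", True),
    ("11111", "A", True), ("11111", "B", True),
    ("22222", "B", False), ("22222", "B", True),
    ("22222", "B", False), ("22222", "A", False),
]


def fit_zip_model(rows):
    """Learn the historical repayment rate per zip code. That is the whole 'model'."""
    totals, repaid = defaultdict(int), defaultdict(int)
    for zip_code, _group, ok in rows:
        totals[zip_code] += 1
        repaid[zip_code] += ok  # True counts as 1, False as 0
    return {z: repaid[z] / totals[z] for z in totals}


def approve(model, zip_code, threshold=0.5):
    """Approve anyone whose zip code repaid at or above the threshold."""
    return model.get(zip_code, 0.0) >= threshold


def approval_rate_by_group(model, rows):
    """Audit step: the model never saw 'group', but we can still measure by it."""
    totals, approved = defaultdict(int), defaultdict(int)
    for zip_code, group, _ok in rows:
        totals[group] += 1
        approved[group] += approve(model, zip_code)
    return {g: approved[g] / totals[g] for g in totals}


model = fit_zip_model(HISTORY)
rates = approval_rate_by_group(model, HISTORY)
print(rates)  # {'A': 0.75, 'B': 0.25}
```

Note that the one person in zip 22222 who did repay gets denied along with their neighbors. The code never complains about any of this. It takes a human running an audit like `approval_rate_by_group` to notice.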

- You can imagine how machine-learning systems fed by different streams of behavioral data will be soon placing us not just into one tribe but into hundreds of them, even thousands. Certain tribes will respond to similar ads.

I can imagine this, because I am pretty sure this is how a lot of digital advertising works. This is how that Facebook anti-Semitic targeting snafu happened. The machines looked at data, found some patterns, and, wham, let’s target some Nazis.

- With political messaging, as with most WMDs, the heart of the problem is almost always the objective. Change that objective from leeching off people to helping them, and a WMD is disarmed – and can even become a force for good.

Enough with the fearmongering. It all comes back to us humans (as terrifying as that might be). The computers, algorithms, models, and robots are Switzerland (for now… I, for one, welcome our robot overlords). They just tell us what the data says based on the parameters we feed them.

We can’t just say “the model said so” and be absolved of responsibility for anything bad that happens. Ultimately, these things are only as good as us.