
Robots Weekly: Back(prop) to the Future

I’ve been doing some learning and a lot of reading about Artificial Intelligence, Machine Learning, Neural Networks, Deep Learning, and all those other robot-y buzzwords lately. Since I know some of the other Blue Ioners are interested in this stuff, I started collecting the stories I read, writing a little context – my opinion, synopsis, quotes I liked, etc. – around them, using a lot of emojis, and sharing them via email. Because who doesn’t love unsolicited emails full of links and emojis in their inbox? They thought I should put it on the blog for all you lovely people to enjoy.

So, what are we talking about this week?

Backpropagation

Say what now?

Backpropagation is deep learning’s secret sauce. It is used in neural networks, which are supposed to be computer recreations of the human brain, to help them learn.

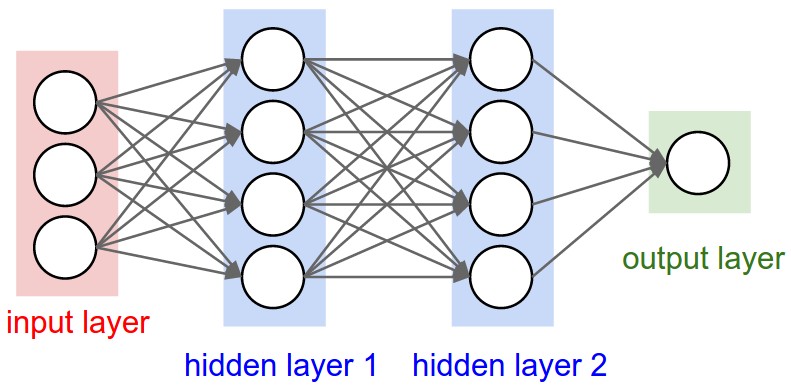

When you run a neural network you start at the beginning – the input layer – and move through the network – the hidden layers – until you get your output (layer), but that output isn’t always right. When it wasn’t right, you probably had to do a lot of work to figure out where it went wrong and why, yada yada.
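If it helps to see that input-to-output trip in code, here is a minimal sketch in plain Python. Everything in it is made up for illustration: the two-input, two-hidden-neuron, one-output shape, the weight values, and the choice of a sigmoid to squash each neuron's answer between 0 and 1.

```python
import math

def sigmoid(x):
    # Squashes any number into the range (0, 1)
    return 1.0 / (1.0 + math.exp(-x))

def layer(inputs, weights, biases):
    # Each row of `weights` holds the incoming arrows for one neuron
    return [
        sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]

def forward(inputs, hidden_w, hidden_b, output_w, output_b):
    # Input layer -> hidden layer -> output layer
    hidden = layer(inputs, hidden_w, hidden_b)
    output = layer(hidden, output_w, output_b)
    return hidden, output

# Hypothetical numbers, not taken from any real model
inputs = [0.9, 0.1]
hidden_w = [[0.5, -0.4], [0.3, 0.8]]
hidden_b = [0.0, 0.0]
output_w = [[1.2, -0.7]]
output_b = [0.0]

hidden, output = forward(inputs, hidden_w, hidden_b, output_w, output_b)
print("hidden layer:", hidden)
print("output layer:", output)
```

The single output number can be read as the network's guess. Nothing in this forward trip checks whether the guess is right.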

But then backpropagation came along.

Each of those arrows in the neural network above has a different weight. That weight is a reflection of how much the next neuron or node should care about what the previous one says. The stronger the feels, the higher the weight.
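Here is a tiny sketch of what a weight does to a signal on its way down an arrow. The inputs and weights are invented; the point is only that the same signal counts for more when the arrow it travels along has a higher weight.

```python
def contributions(inputs, weights):
    # Each arrow passes along its input scaled by that arrow's weight
    return [x * w for x, w in zip(inputs, weights)]

# Two neurons shouting equally loudly, two arrows with different weights
inputs = [1.0, 1.0]
weights = [0.2, 0.9]  # hypothetical values

parts = contributions(inputs, weights)
total = sum(parts)  # what the next neuron receives before any squashing

print("per-arrow contributions:", parts)
print("total arriving at the next neuron:", total)
```

Both inputs are identical, yet the second arrow delivers far more of its signal because its weight is higher.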

Backpropagation is an added step of running through the neural network backwards (get it? backwards, backpropagation…never mind) and reweighting the connections between neurons. If the output is incorrect – let’s say this is image recognition and it said “hot dog” when the right answer was “not hot dog” – then the backpropagation step follows the trail backwards and lowers the weight of the connections that led to the “hot dog”.

In other words, your neural network just learned!
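For the curious, here is a rough sketch of that backwards trip on a toy network. Treat it as one possible way to do it, not the way any particular library does it. The assumptions are all mine: a single “hot dog” score as the output, a target of 0 for “not hot dog”, squared error as the measure of wrongness, sigmoid neurons, a learning rate of 0.5, and made-up starting weights.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def forward(x, w_hidden, w_out):
    # Forward trip: inputs -> hidden neurons -> one "hot dog" score
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x))) for row in w_hidden]
    score = sigmoid(sum(w * h for w, h in zip(w_out, hidden)))
    return hidden, score

def backprop_step(x, target, w_hidden, w_out, lr=0.5):
    hidden, score = forward(x, w_hidden, w_out)
    # How wrong was the output? (gradient of 0.5 * (score - target)**2
    # with respect to the output neuron's pre-squash sum)
    delta_out = (score - target) * score * (1.0 - score)
    # Follow the trail backwards: each hidden neuron gets a share of the
    # blame, in proportion to the weight of its arrow into the output
    delta_hidden = [delta_out * w * h * (1.0 - h) for w, h in zip(w_out, hidden)]
    # Reweight every arrow a little, in the direction that shrinks the error
    new_w_out = [w - lr * delta_out * h for w, h in zip(w_out, hidden)]
    new_w_hidden = [
        [w - lr * d * xi for w, xi in zip(row, x)]
        for row, d in zip(w_hidden, delta_hidden)
    ]
    return new_w_hidden, new_w_out

# Hypothetical picture features and starting weights
x = [0.9, 0.1]
target = 0.0  # the right answer was "not hot dog"
w_hidden = [[0.5, -0.4], [0.3, 0.8]]
w_out = [1.2, 0.7]

_, before = forward(x, w_hidden, w_out)
for _ in range(20):
    w_hidden, w_out = backprop_step(x, target, w_hidden, w_out)
_, after = forward(x, w_hidden, w_out)

print("hot dog score before:", before)
print("hot dog score after: ", after)
```

After a handful of backwards trips the “hot dog” score for this input drops, because the arrows that pushed it up have had their weights lowered.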

Backpropagation is most closely associated with one Geoffrey Hinton, who helped popularize it back in the ’80s, but it only hit the mainstream relatively recently (partially thanks to Moore’s Law).

Hawt 4 Hinton

Is AI Riding a One-Trick Pony?

A look at backprop (that’s what its friends call it) and Geoff (that’s what his friends call him, I’m assuming) a.k.a. the guy who invented/discovered it; or, how deep learning became a thing. (Probably a better description than what I hacked out above.)

Plus some reminders that we aren’t at T-1000 yet:

- “Neural nets are just thoughtless fuzzy pattern recognizers”

- “A real intelligence doesn’t break when you slightly change the requirements of the problem it’s trying to solve.”

But wait, there’s more!

Artificial intelligence pioneer says we need to start over

I guess the headline “Guy that invented backprop thinks we need to get rid of backprop” didn’t make the cut.

But yeah, our friend Geoff says it’s time to trash backprop and start fresh. Talk about killing your darlings.

Something To Keep You Up At Night

China wants to be the world leader in AI by 2030. And it could probably happen.

One reason why: “a large amount of data makes a large amount of difference” and China has plenty of data.

But it’s probably not as scary as it sounds, and we can definitely learn from it. Read about China’s AI Awakening.